A Visualized Self

Ernesto Ramirez

October 2, 2012

Typically, when Quantified Self-ers talk about using photography and image capture for self-tracking, they’re talking about taking pictures of their food. Pictures are a very powerful way to capture information for better understanding; after all, they are worth a thousand words. Here on the blog we’ve also highlighted a few really interesting projects that turn the lens around and use images to track the person behind the camera, such as Jeff Harris and his 13 years of self-portraits.

One of the projects that I found super interesting was LifeSlice by Stan James.

For those of you who want to try LifeSlice, Stan has put the code online for you to use and possibly tinker with. As a new user, I can say that it is pretty interesting to see how my facial characteristics map to what I’m doing on the computer. For example, here’s me looking at a new statistical software package for Mac (Wizard).

And here’s me writing this post while listening in at a conference on health data.

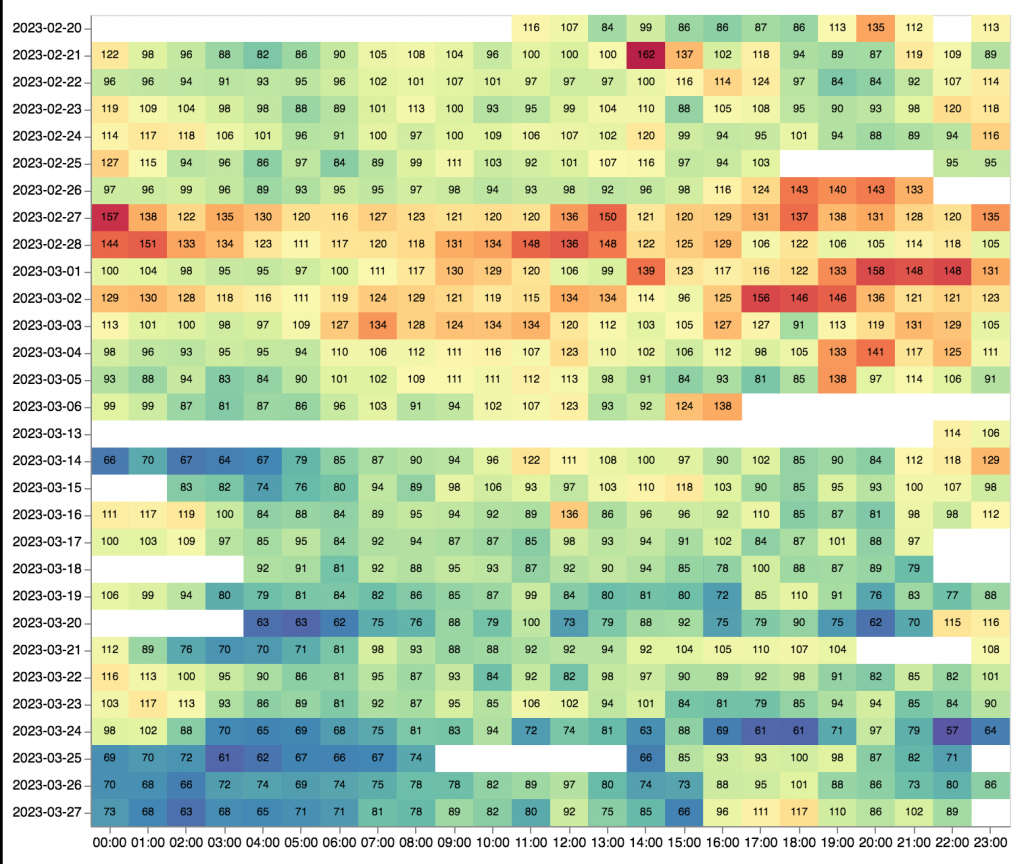

The last project I want to highlight here is the self-portrait project of Noah Kalina. Noah is a photographer who has been taking self-portraits every day for 12.5 years (January 11, 2000 – June 20, 2012). A few months ago he put all 4,514 images together into one amazingly insightful video.

Than Tibbetts was so intrigued by this project that he decided to work some fancy image processing magic to find out what “Average Noah” looked like, and found this:
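If you want to try something similar with your own self-portraits, the basic idea of an "average" face can be sketched as a pixel-by-pixel mean. This is only a guess at the general approach, not Than's actual method: it assumes every photo has already been aligned and resized to the same dimensions, and it uses tiny grayscale grids in place of real photos. The `average_images` function and the `frames` data are made up for illustration.

```python
def average_images(images):
    """Return the pixel-wise mean of equally sized grayscale images.

    Each image is a list of rows, and each row is a list of 0-255 integers.
    (Assumption: the photos are already aligned and share one size.)
    """
    if not images:
        raise ValueError("need at least one image")
    height, width = len(images[0]), len(images[0][0])
    for img in images:
        if len(img) != height or any(len(row) != width for row in img):
            raise ValueError("all images must share the same dimensions")
    count = len(images)
    # Average the value at each (row, column) position across every image.
    return [
        [round(sum(img[y][x] for img in images) / count) for x in range(width)]
        for y in range(height)
    ]


# Three hypothetical 2x2 "photos" standing in for daily self-portraits.
frames = [
    [[0, 100], [200, 50]],
    [[100, 100], [100, 150]],
    [[200, 100], [0, 100]],
]
average = average_images(frames)
```

With real photos you would load the pixel data with an image library first, and you would probably need to line up the eyes or face in each shot before averaging, or the result turns into a blur.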

I’m sure there are more projects out there that involve individuals turning the camera on themselves. We all have cameras with us in our pockets and on our computers. How are you using those image capture technologies to better understand yourself? If you’re working on something interesting, let us know!