Toolmaker Talk: Yoni Donner (Quantified Mind)

Rajiv Mehta

April 11, 2012

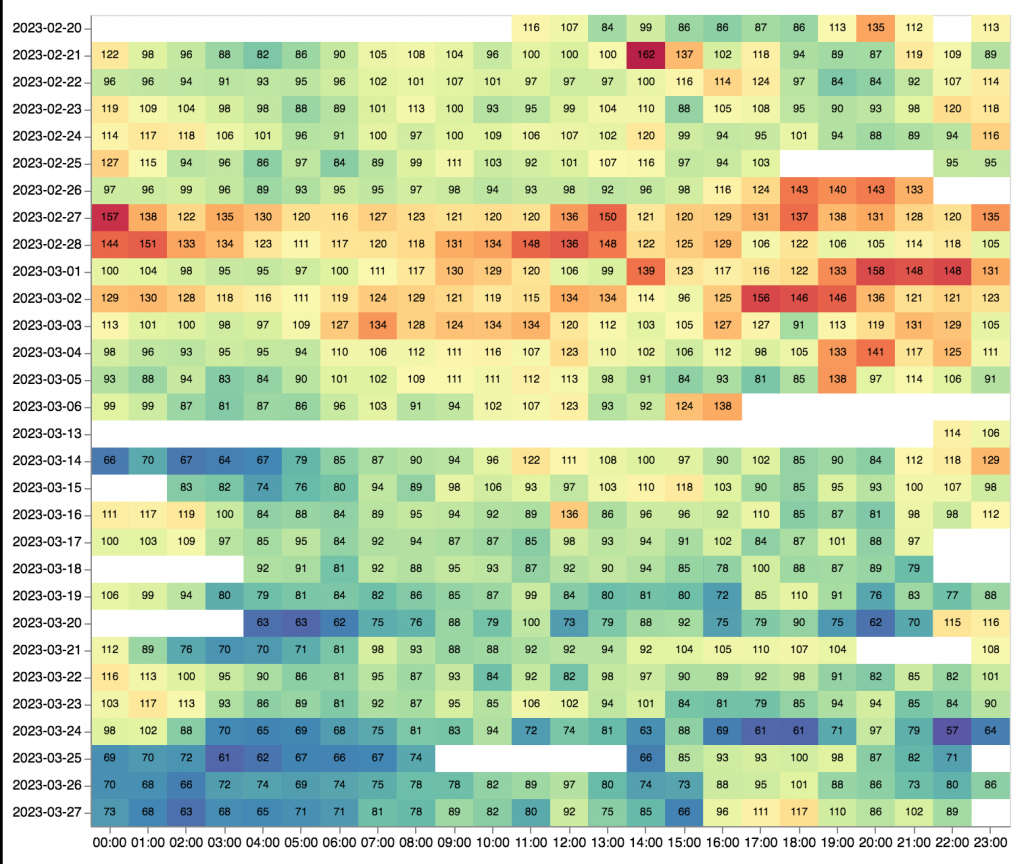

There are ever more widgets to measure our physical selves, but how can we measure how well we’re thinking? Yoni Donner is trying to address this need with Quantified Mind. At a recent Bay Area QS meetup he told us how he used his tool to discover that fasting reduced his mental acuity, which was the opposite of what he had expected. Here he tells us what led to his developing Quantified Mind, and the the difficulties of creating such a tool.

Q: How do you describe Quantified Mind? What is it?

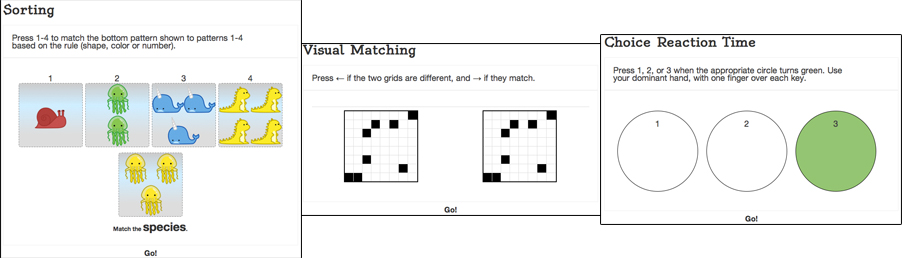

Donner: Quantified Mind is a web application that allows users to track the variation in their cognitive functions under different conditions, using cognitive tests that are based on long-standing principles from psychology, but adapted to be repeatable, short, engaging, automatic and adaptive.

The goal is to make cognitive optimization an exact science instead of relying on subjective feelings, which can be deceiving or so subtle that they are hard to interpret. Quantified Mind allows fun and easy self-experimentation and data analysis that can lead to actionable conclusions.

Q: What’s the back story? What led to it?

Donner: 2-3 years ago I started a discussion group dedicated to meta-optimization. Quickly many suggestions for cognitive improvement came up, and it also became clear that we need to test the hypotheses scientifically to make sense of this huge domain. I then did over a year of pure study of the previous work in measuring cognitive abilities.

I realized that while the existing tests are useful for identifying interindividual differences and detecting pathologies, no solution exists for repeatedly testing the same individual under different conditions, and that I need to collect the psychometric principles that were already established and adapt the tests to the requirements of the new goal: tracking within-person variation in multiple cognitive abilities.

Then there came a long design and planning stage which eventually led me to write a prototype in Python that ran locally. After meeting Nick Winter the real work on making the web application started.

There were many challenges in designing the tests so that they are repeatable and efficient, and trying to minimize practice effects. Much of early stage of the project was spent reading papers and books to identify where I could adapt established tests to my different goals. There was no single formula but one principle that comes up a lot is to change the difficulty of the test dynamically based on the user’s accuracy, to reach a steady state of some fixed accuracy, and apply Bayesian estimation to the parameters of interest. For example, in Digit Span we estimate the level in which the user would get exactly 50% of the trials correct. The reason that our verbal learning test doesn’t use a fixed number of items is that some people would find 10 items too hard and others would find 30 too easy, so any fixed number would waste a lot of their time testing them at an inappropriate level.

We haven’t established validity yet independently from the tests we are based on. This is something that I would very much like to do, but need many test subjects for. In fact, not much is known about the extent to which the intra-individual variance structure resembles the inter-individual structure that has been studied so much. With enough data, we can learn so much!

Now we are at the point where everything is functional, though the UI clearly still needs work. We’ve been live and collecting data for about two months now.

Q: What impact has it had? What have you heard from users?

Donner: People had far more positive reactions than what I dared hope for. I was afraid that people would say it’s too much work because it’s a kind of tracking where you actually need to spend some time on the tracking itself.

We have over 200 users now and almost 100 hours of testing time, though only a small fraction (about 10) are consistently using the site for self-tracking. Feedback was very constructive and I love it when people just share with me interesting things they learned about themselves.

For example, some things people shared with me: butter seems to be individual since one user had a very significant negative effect from just butter, but another had a pretty big positive effect from butter+coffee; piracetam had a small positive effect; 50gr of 85% dark chocolate increased number of errors; lactose and gluten had small negative effects. I love these individual stories but I think that organizing controlled trials will tell us much more. In any case this is just the beginning – we launched very recently, and don’t have much data yet.

Q: What makes it different, sets it apart?

Donner: It is the only cognitive measurement tool that is designed completely for repeated testing and tracking variation over time. It has more tests (over 25 now) than other cognitive testing sites and covers many cognitive domains (processing speed, motor function, inhibition, context switching, attention, verbal and visuospatial learning and working memory, visual and auditory perception and more coming). The data is collected such that everything is stored, not just aggregate statistics, so we can analyze new questions using existing data. We allow queries and statistical analysis of your results through the site itself, and plan to improve these features even more.

I think this combination makes Quantified Mind unique: (1) careful adaptations of many well-known tests and principles from psychological research; (2) multiple domains covered by tests designed to be repeatable, short, adaptive, efficient and reasonably fun; (3) emphasis placed on data collection and analysis.

Q: What are you doing next? How do you see Quantified Mind evolving?

Donner: I think most people think it’s cool but the barrier to starting your own experiments is high. The main insight from users is that I should probably make it even easier to figure out how to use Quantified Mind to quickly get benefits. I want to add more content like suggested experiments, documentation of what other people did and what they learned, and the science behind all of it, and most of these ideas came from users. Aside from that, there are many features to add such as better UI, more tests (I am working on mood detection now), better tools to access and analyze data.

At a higher level, I want to go forward and develop a science of cognitive optimization. There are many interventions to test and I want to study as many of them as possible using rigorous controlled studies and publish the results. It’s time for cognitive improvement to take a step forward from being astrology-like to being a proper science.

Q: Anything else you’d like to say?

Donner: Thanks for doing this! The QS community is wonderful and I think the future for taking care of our own health, brains and general well-being looks bright – but of course we should measure that, too.

I am always looking for people who share the vision. If you are interested in helping develop Quantified Mind further or helping run experiments, contact me (yonidonner@gmail.com).

Product: Quantified Mind

Website: www.quantified-mind.com

Platform: web

Price: free

This is the 13th post in the “Toolmaker Talks” series. The QS blog features intrepid self-quantifiers and their stories: what did they do? how did they do it? and what have they learned? In Toolmaker Talks we hear from QS enablers, those observing this QS activity and developing self-quantifying tools: what needs have they observed? what tools have they developed in response? and what have they learned from users’ experiences? If you are a “toolmaker” and want to participate in this series, contact Rajiv Mehta at rajivzume@gmail.com.